Naming AI products is a bit hit-or-miss. Some names sound as if they were polished in a branding lab for six months, while others feel as though they were pulled from a hat. Claude has a certain elegance. Gemini is fine. ChatGPT, on the other hand, is a rubbish name that only became familiar through brute force, because the product was suddenly absolutely everywhere.

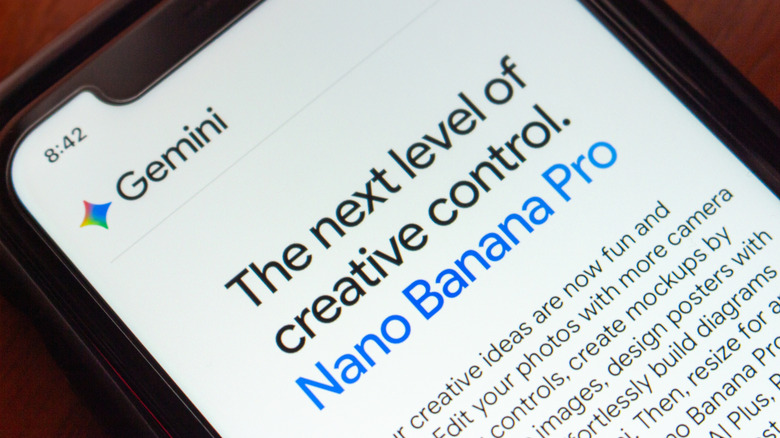

Nano Banana is the nickname for Google Gemini’s AI image generator, which enables anyone to create realistic-looking pictures. In Google’s technical documentation, the original model is Gemini 2.5 Flash Image and its Pro successor is Gemini 3 Pro Image Preview. However, the name “Nano Banana” is both more official and less official than you might think. Google now openly brands the models as Nano Banana Pro and even Nano Banana 2, but that wasn’t the original plan.

Nano Banana has such a weird name because it was never intended to be taken seriously. The team needed a temporary codename for Arena.ai (then called LMArena), the crowdsourced model-testing platform where systems are compared anonymously, and nothing was chosen until the last minute. Pushed to come up with something on the spot, Product Manager Naina Raisinghani suggested Nano Banana, a combination of two of her nicknames. “Some of my friends call me Naina Banana, and others call me Nano because I’m short and I like computers. So I just smushed my two nicknames together,” Raisinghani explained on Google’s blog, The Keyword.

Nano Banana quickly caught on

Nano Banana was first uploaded to Arena.ai on August 12, 2025. Despite Google’s attempts to keep its identity secret, some people were quick to speculate that the highly rated new image generation and editing tool was a Google product, and within days users were sharing their AI-generated creations on social media. After a week of speculation, a couple of posts on X fueled users’ suspicions: Logan Kilpatrick, Product Lead for Google AI Studio, posted a banana emoji, and Naina Raisinghani, the product manager behind the name, shared a picture of a banana gaffer-taped to a wall. Nano Banana officially launched on August 26, 2025, upstaging ChatGPT as the most popular AI image generator.

Naina Raisinghani (@nainar92) on X, August 19, 2025: https://t.co/9Qtne8CWwI pic.twitter.com/ejPA82QCcT

It’s not the first tech product with “banana” in its name. Fruit-named brands such as Apple, BlackBerry, and Raspberry Pi are more familiar, but you can also buy a bananaphone, a banana-shaped Bluetooth headset that pairs with your smartphone. There’s also a 2019 research paper with a BANANAS algorithm, which stands for Bayesian Optimization with Neural Architectures for Neural Architecture Search. (You have to respect the contrivance even if it doesn’t quite work.) Tech companies are still naming things after fruit: OpenAI internally used “Strawberry” for the project that became o1, and Meta is currently working on an AI model nicknamed “Avocado.”

Nano Banana may not have been meant as the official name, but it stuck because people liked it. Companies spend fortunes chasing that kind of stickiness, and Google stumbled into it. The model got noticed, the odd codename was memorable, and Google was smart enough not to crush the joke with a committee-approved replacement.