Remote work affects where we can live (farther from the job), our childcare (women do more), and what we wear (more casual).

Also, our grocery shopping changes.

WFH Grocery Shopping

The fraction of our WFH (work from home) days has soared, rising from 7% to slightly more than 25% between 2019 and 2026. As a result, for more than 35 million people, where we shop, what we buy, and how much we spend have changed.

Where We Shop

As you might expect, partially and fully remote households do more online grocery shopping. The days they shop have also changed, with relatively more purchases on weekdays. In addition, they make fewer weekday trips to the store when compared to weekend visits. But still, when they do go to the store, the number of trips increases while their duration is down.

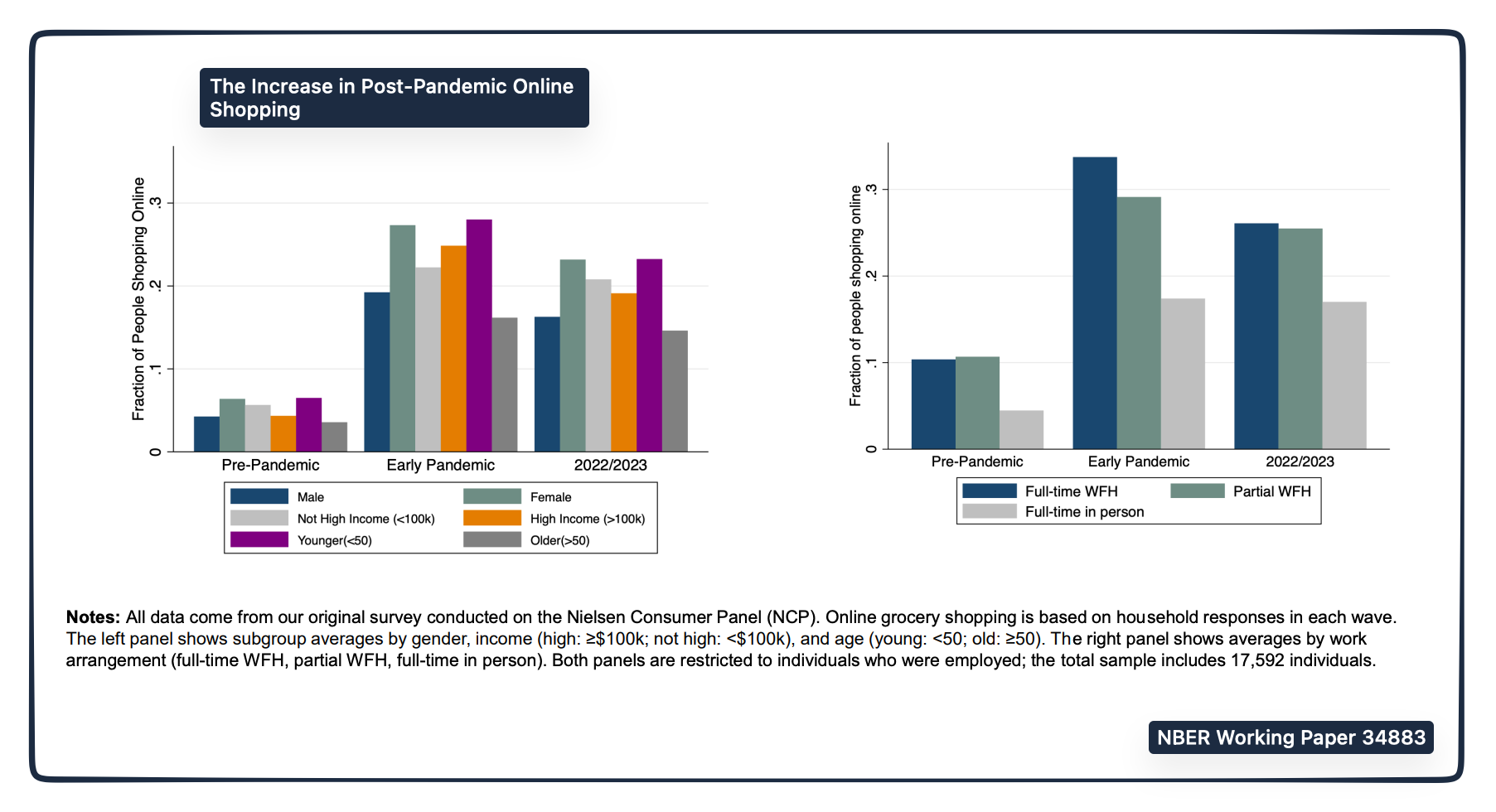

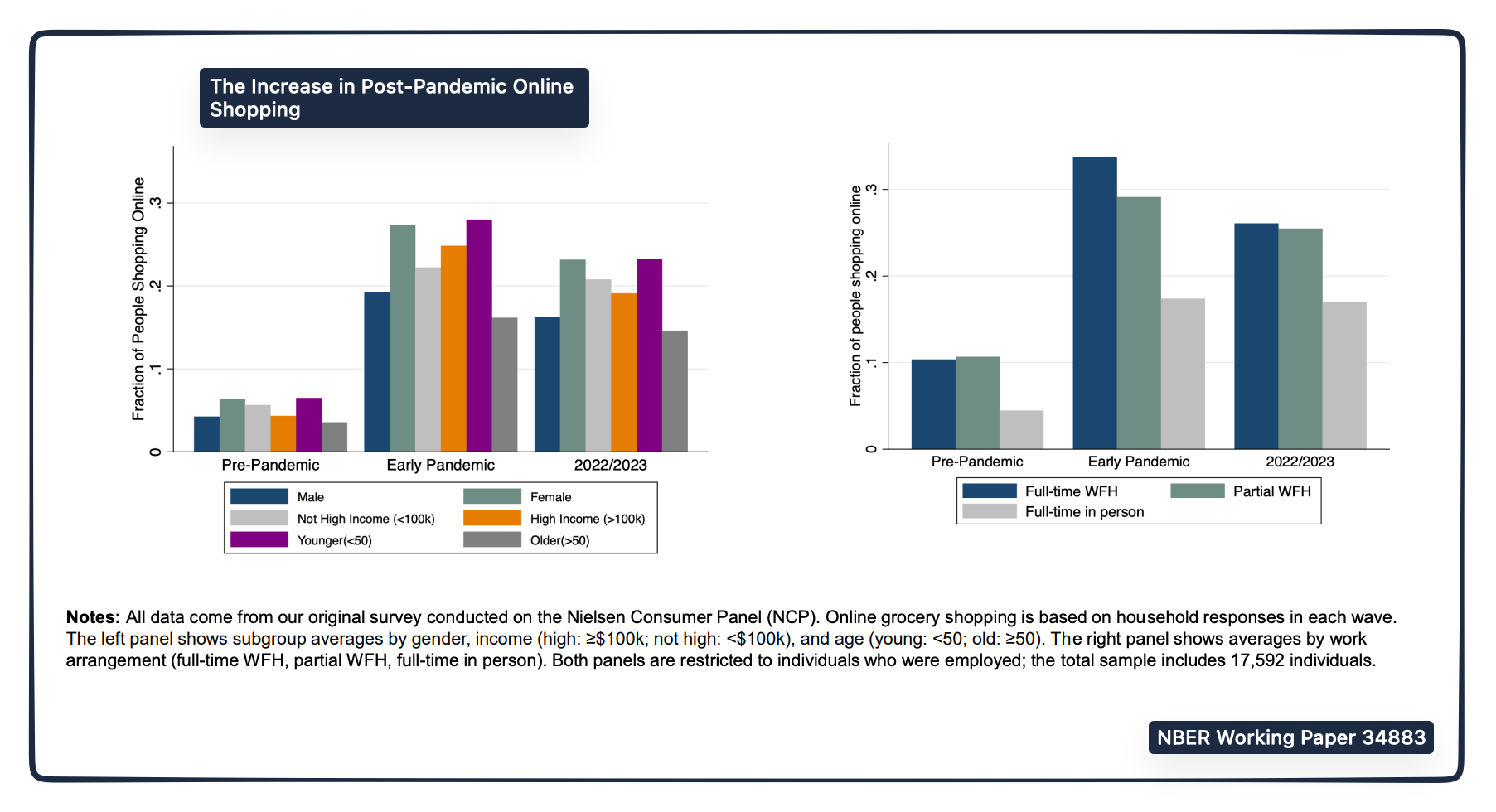

You can see the online increase:

How Much We Spend

The data also indicate that remote worker households spend 1% more on groceries. One reason is that they don’t seem to mind paying higher prices while taking advantage of fewer deals. An economist would say that, caring less about price, they display inelastic behavior.
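To make "inelastic" concrete, here is a minimal sketch of how an economist might measure it with the midpoint formula for price elasticity of demand. The function names and all of the numbers are hypothetical illustrations, not figures from the study. When the elasticity's magnitude is below 1, quantity responds proportionally less than price, which is what we call inelastic.

```python
def midpoint_elasticity(q_old, q_new, p_old, p_new):
    """Price elasticity of demand using the midpoint (arc) formula."""
    # Percent changes are measured against the average of old and new values.
    pct_change_q = (q_new - q_old) / ((q_new + q_old) / 2)
    pct_change_p = (p_new - p_old) / ((p_new + p_old) / 2)
    return pct_change_q / pct_change_p


def classify(elasticity):
    """Label demand by the magnitude of its elasticity."""
    magnitude = abs(elasticity)
    if magnitude < 1:
        return "inelastic"
    if magnitude > 1:
        return "elastic"
    return "unit elastic"


# Hypothetical household: the price rises from $4.00 to $5.00,
# but purchases only slip from 100 units to 95.
e = midpoint_elasticity(q_old=100, q_new=95, p_old=4.00, p_new=5.00)
print(round(e, 2), classify(e))  # -0.23 inelastic
```

In this made-up case, a price increase of roughly 22% trims purchases by only about 5%, so the shopper is behaving inelastically.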

What We Buy

The data for this study were precise. For example, researchers could distinguish between purchases of 20 oz. bottles of Coca-Cola and 12 packs of cans. As a result, they could be sure that for remote consumers, spending was up for food and general merchandise and down for health and beauty items. Furthermore, they found that the breadth of product selection expanded, with more distinct items purchased. Also, when the husband in a married household switched to remote work, he took over more of the shopping, and prices mattered less.

Our Bottom Line: Tradeoffs

Everywhere, you can see that remote workers’ shift in shopping behavior reflects their tradeoffs. Because the opportunity cost of a decision is the sacrificed alternative, I suspect the remote worker’s tradeoffs primarily relate to time. Whether it’s the convenience of online shopping, fewer trips to the store, or less attention to price, the common thread is the extra time the alternative would have required.

My sources and more: Most of today’s facts are from this NBER paper. Then, we found more WFH stats here while econlife explained the increase in dogwalkers.

Nicole Byers is an entertainment enthusiast! Nicole is an entertainment journalist for the Maple Grove Report.